AI content isn’t going anywhere, but the way we evaluate it is changing fast. This guide breaks down what an AI Content Detector actually does, how these systems work under the hood, and where their accuracy holds up (and where it doesn’t). It walks through real-world testing insights, compares leading tools for schools, agencies, and enterprises, and explains how AI detection differs from plagiarism checks. You’ll also see how integrations, APIs, and workflow decisions matter just as much as raw accuracy. Whether you’re reviewing student submissions, managing content teams, or protecting brand credibility, this guide gives you the context needed to use AI detection tools responsibly, not blindly.


What Is an AI Content Detector?

AI content detectors went from “nice-to-have” tools to essential infrastructure in less than two years. Schools use them to review assignments. Agencies use them to vet outsourced blog posts. Enterprises use them to protect brand credibility.

At its core, an AI content detector is software designed to analyze text and estimate whether it was written by a human or generated by a large language model.

Sounds simple. It’s not.

AI Content Detector Meaning

An AI content detector is a tool that scans written text and assigns a probability score indicating whether the content is AI-generated, human-written, or a mix of both.

It doesn’t “know” who wrote something. It evaluates patterns.

Most AI writing detection tools analyze:

- Sentence structure consistency

- Word predictability

- Token probability patterns

- Statistical fluency

- Repetition and phrasing rhythm

Large language models tend to generate text that is highly coherent, statistically smooth, and predictably structured. Human writing? It’s messier. More uneven. Sometimes brilliant. Sometimes clumsy. Often inconsistent in tone or complexity.

That contrast is what AI-generated vs human-written content detection relies on.

But here’s the nuance: modern AI models are trained on human text. So the line between “AI style” and “human style” keeps getting thinner. Detection tools are constantly trying to catch up.

In practical terms, an AI content detector usually produces:

- An overall AI probability score

- A sentence-level breakdown (in some tools)

- A confidence rating

- Sometimes, a color-coded highlight of flagged sections

The result isn’t a verdict. It’s an estimate.

And that distinction matters.

Why AI Content Detection Matters

Two years ago, AI-generated content was an experiment. Now it’s everywhere.

AI Content in Education

Students use AI to brainstorm, draft essays, summarize research, and even solve coding assignments. Some use it responsibly. Others don’t.

Institutions are under pressure to:

- Maintain academic integrity

- Distinguish assistance from authorship

- Prevent fully AI-written submissions

Without detection tools, enforcement becomes guesswork.

AI-Generated SEO Content

In marketing and publishing, AI has scaled content production dramatically. Entire blogs, landing pages, and product descriptions can be generated in minutes.

The challenge isn’t just volume; it’s authenticity.

Brands worry about:

- Publishing low-quality AI text

- Losing trust with readers

- Diluting expertise signals

- Publishing content that feels generic

AI content detection helps editorial teams review outsourced or AI-assisted content before it goes live.

Not to ban AI. To manage it.

Academic Integrity & Plagiarism Concerns

AI detection and plagiarism detection often get lumped together, but they solve different problems.

Plagiarism checks look for copied material.

AI detection looks for machine-generated patterns.

In universities and certification programs, both are becoming part of submission workflows. It’s not just about catching cheating; it’s about preserving the credibility of degrees and research output.

Google AI Overviews and Content Authenticity

With AI-generated summaries appearing directly in search results, the pressure on publishers has increased. Original reporting, firsthand insight, and experience-based content matter more than ever.

Whether or not search engines penalize AI-generated content directly, low-effort AI text doesn’t perform well long-term. Detection tools give teams an internal checkpoint before publishing.

In short, AI content detection isn’t about fear. It’s about control.

How Does an AI Content Detector Work?

This is where things get technical, but it’s worth understanding.

AI detectors don’t have a secret database of “AI sentences.” They rely on statistical analysis and machine learning models trained to recognize patterns typical of large language models.

AI Detection Algorithms Explained

Most AI detection systems are built on machine learning models trained on two datasets:

- Human-written text

- AI-generated text

The model learns to distinguish between the statistical fingerprints of each.

Here’s what that usually involves.

Machine Learning Models

Detection systems use classification models. These models don’t generate text; they evaluate it.

Given a piece of writing, the model calculates the probability that it belongs to the “AI-generated” class versus the “human-written” class.

It’s pattern recognition at scale.
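As a rough illustration of that classification step, the sketch below turns two numeric features into a probability for the "AI-generated" class using a logistic function. The feature names, weights, and bias are invented for illustration; a real detector learns its parameters from labeled training data and uses far more signals.

```python
import math

# Hypothetical parameters: illustrative values, not taken from any real detector.
WEIGHTS = {"word_predictability": 4.0, "sentence_uniformity": 4.0}
BIAS = -4.0

def ai_probability(features: dict[str, float]) -> float:
    """Map feature values (each scaled 0..1) to a probability of the 'AI-generated' class."""
    z = BIAS + sum(WEIGHTS[name] * features[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))  # logistic (sigmoid) function

# Invented feature values for two pieces of writing.
smooth_text = {"word_predictability": 0.9, "sentence_uniformity": 0.8}
uneven_text = {"word_predictability": 0.4, "sentence_uniformity": 0.3}

print(round(ai_probability(smooth_text), 2))
print(round(ai_probability(uneven_text), 2))
```

The output is a probability between 0 and 1, not a yes/no answer, which is why detector reports are expressed as scores.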

Perplexity and Burstiness Metrics

Two terms show up frequently in AI writing detection:

- Perplexity

- Burstiness

Perplexity measures how predictable a piece of text is. AI-generated content tends to have lower perplexity because it follows statistically likely word sequences.

Human writing often includes surprising word choices, uneven rhythm, or unconventional phrasing, which increases perplexity.

Burstiness refers to variation in sentence length and complexity. Humans tend to write in bursts: short sentences followed by longer ones. AI models often produce more uniform sentence structures.

Detection tools analyze these signals across the entire document.
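Both metrics can be sketched in a few lines of Python. This is a simplified illustration, not any vendor's formula: perplexity is computed as the exponential of the average negative log-probability per token, and burstiness is approximated here as the coefficient of variation of sentence lengths, which is one possible proxy among several. The token probabilities are invented; in practice they come from a language model.

```python
import math
import re
import statistics

def perplexity(token_probs: list[float]) -> float:
    """exp of the average negative log-probability per token."""
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)

def burstiness(text: str) -> float:
    """Coefficient of variation of sentence lengths, measured in words."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    return statistics.pstdev(lengths) / statistics.mean(lengths)

# Invented per-token probabilities for a predictable and a surprising passage.
predictable = [0.9, 0.8, 0.85, 0.9]
surprising = [0.2, 0.05, 0.4, 0.1]
print(perplexity(predictable), perplexity(surprising))

uniform = "The tool scans the text. The tool reports a score. The user reads the result."
varied = "It failed. Nobody on the team could explain why the score jumped overnight after a minor edit. Odd."
print(burstiness(uniform), burstiness(varied))
```

Lower perplexity and lower burstiness both point toward the "statistically smooth" profile described above, though neither is conclusive on its own.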

Token Probability Patterns

Large language models generate text token by token, typically favoring statistically probable next tokens.

Over long passages, this creates subtle patterns:

- Even tone distribution

- Predictable transitions

- Balanced sentence flow

AI detectors reverse-engineer those probabilities. They estimate whether the text aligns with how an AI model would likely generate it.

It’s not mind-reading. It’s probability math.
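To make the probability math concrete, here is a toy sketch that scores text with a bigram model built from word-pair counts. This is a stand-in chosen for brevity: real detectors would rely on a large neural language model, and the tiny corpus and add-one smoothing here are assumptions for illustration only.

```python
import math
from collections import Counter, defaultdict

def train_bigram(corpus: str):
    """Count word pairs so we can estimate P(next word | previous word)."""
    words = corpus.lower().split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts, set(words)

def avg_log_prob(text: str, counts, vocab) -> float:
    """Average log-probability per word transition, with add-one smoothing.

    Expects text with at least two words.
    """
    words = text.lower().split()
    total = 0.0
    for prev, nxt in zip(words, words[1:]):
        seen = counts[prev]
        # +1 in the denominator reserves probability mass for unseen words.
        prob = (seen[nxt] + 1) / (sum(seen.values()) + len(vocab) + 1)
        total += math.log(prob)
    return total / (len(words) - 1)

counts, vocab = train_bigram("the model writes the text and the model scores the text")
print(avg_log_prob("the model writes the text", counts, vocab))
print(avg_log_prob("text the writes model the", counts, vocab))
```

Text that follows the model's expected word order gets a higher average log-probability than the same words shuffled, which is the kind of alignment a detector looks for.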

Transformer-Based Detection Systems

Some advanced detectors use transformer architectures similar to the models they’re evaluating. Instead of generating content, these systems classify it.

They look at:

- Contextual embeddings

- Sequence coherence

- Deep semantic consistency

This makes detection more nuanced, especially for longer documents.

Training Data Behind AI Writing Detection

An AI detector is only as strong as the data it’s trained on.

To recognize output from GPT-style models, for example, systems are trained on:

- Outputs from specific language models

- Human-written essays

- Editorial content

- Academic papers

- Mixed AI-human samples

Over time, they learn to recognize the patterns that characterize large language model output.

But here’s the catch: as AI models evolve, detection models must retrain. GPT-4 writes differently from earlier versions. Newer models reduce repetition and improve stylistic variation.

That means detection accuracy is a moving target.

Some platforms also claim source-backed detection logic, meaning they combine statistical modeling with traceable data references or layered verification systems. This can improve transparency, though it still remains probabilistic.

Can AI Detectors Accurately Detect ChatGPT Content?

This is the question everyone asks.

Short answer: sometimes.

AI detectors can identify many fully AI-generated passages with reasonable accuracy. Especially if the text hasn’t been edited.

But challenges remain.

ChatGPT Detection Challenges

- Edited AI text becomes harder to detect

- Mixed human-AI content blurs patterns

- Highly structured academic writing can look “AI-like”

- Non-native English writing may trigger false positives

The cleaner and more predictable the writing, the more likely it may be flagged, even if it’s human-written.

False Positives vs False Negatives

- False positive: Human text flagged as AI

- False negative: AI text labeled as human

Both happen.
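If you have a small set of documents whose true origin you know, you can measure both error types yourself. The sketch below assumes each document is labeled either "human" or "ai"; the sample labels and predictions are invented for illustration.

```python
def error_rates(labels: list[str], predictions: list[str]) -> dict[str, float]:
    """Compute false positive and false negative rates.

    labels and predictions hold 'human' or 'ai' for each document.
    Assumes at least one document of each true class.
    """
    pairs = list(zip(labels, predictions))
    human_preds = [pred for label, pred in pairs if label == "human"]
    ai_preds = [pred for label, pred in pairs if label == "ai"]
    return {
        # Human-written text flagged as AI.
        "false_positive_rate": human_preds.count("ai") / len(human_preds),
        # AI-generated text labeled as human.
        "false_negative_rate": ai_preds.count("human") / len(ai_preds),
    }

# Invented evaluation data: true origin vs. what a detector reported.
labels = ["human", "human", "human", "human", "ai", "ai", "ai", "ai"]
predictions = ["human", "ai", "human", "human", "ai", "ai", "human", "ai"]
rates = error_rates(labels, predictions)
print(rates)
```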

In academic settings, false positives are particularly sensitive. Wrongly accusing someone of using AI can damage trust.

That’s why many institutions use AI detection as a signal, not final proof.

Why AI Detection Is Probabilistic (Not 100%)

AI content detection is fundamentally probabilistic because it’s based on statistical similarity, not authorship verification.

No detector can see intent.

No detector can confirm who typed the words.

It can only evaluate patterns.

That’s why most reliable tools provide:

- A probability percentage

- A confidence score

- Contextual breakdowns

Used properly, AI content detectors are powerful review tools.

Used blindly, they’re risky.

Understanding how they work, and where they fall short, is what separates responsible implementation from overreliance.

10 Best AI Content Detectors

The AI content detector market has matured quickly. What started as basic probability scanners has turned into a competitive space with academic integrations, enterprise APIs, Chrome extensions, and multi-language support.

Some tools are built for schools. Others for publishers. A few are clearly enterprise-first. The right choice depends less on hype and more on use case.

Below is a practical breakdown of the 10 best AI content detectors in 2026, where they shine, where they fall short, and who they’re really built for.

1. Originality.ai – Best AI Content Detector for SEO and Publishers

Originality.ai has positioned itself strongly in the publishing and content marketing space. It’s known for combining AI detection with plagiarism scanning in a single workflow.

What stands out:

- Strong AI detection accuracy across long-form content

- GPT-4 detection capabilities

- Combined plagiarism + AI scan

- Team management and audit logs

- API access for scaled workflows

For agencies and content-heavy publishers, the combined AI and plagiarism check saves time. Instead of juggling two tools, everything runs in one dashboard.

It’s not the cheapest option, but it’s built for teams that care about accountability and content quality at scale.

2. GPTZero – Best AI Detector for Students and Educators

GPTZero became popular early in the AI detection wave, especially in academic environments.

Its strengths are straightforward:

- Sentence-level AI detection

- Probability breakdowns per paragraph

- Classroom-friendly interface

- LMS integrations

Educators appreciate the transparency. Instead of just saying “AI detected,” it highlights which sections triggered the score.

It’s particularly useful for shorter academic essays. For heavily edited or hybrid AI-human content, results can be mixed, but in classrooms, it remains widely used.

3. Copyleaks AI Content Detector – Enterprise-Grade AI Detection

Copyleaks approaches AI detection with an enterprise mindset. It’s not just a web tool; it’s infrastructure.

Key features:

- LMS integrations

- Chrome extension

- Multi-language AI detection

- API for bulk scanning

- Source-backed AI probability reports

It supports multiple languages better than many competitors, which matters for international institutions and global companies.

If you’re managing large volumes of submissions or documents, Copyleaks feels engineered for that level of scale.

4. Turnitin AI Detection – Academic AI Content Detection

Turnitin is already deeply embedded in universities worldwide. Its AI detection feature builds on that foundation.

What makes it different:

- Direct integration into university submission systems

- AI + plagiarism cross-checking

- Institutional compliance workflows

Because it’s part of the existing academic infrastructure, adoption is frictionless for universities already using it.

However, it’s not designed for marketers or publishers. This is squarely an academic solution.

5. Winston AI – AI Content Detector for Publishers

Winston AI is geared toward content creators and publishers.

Notable features include:

- OCR scanning (useful for scanned documents or screenshots)

- AI probability scoring

- Google Docs compatibility

- Clean, easy-to-read reports

The OCR capability is underrated. In some editorial environments, content isn’t always submitted as clean text files.

For digital publishers reviewing freelance work, Winston AI offers a practical balance between simplicity and functionality.

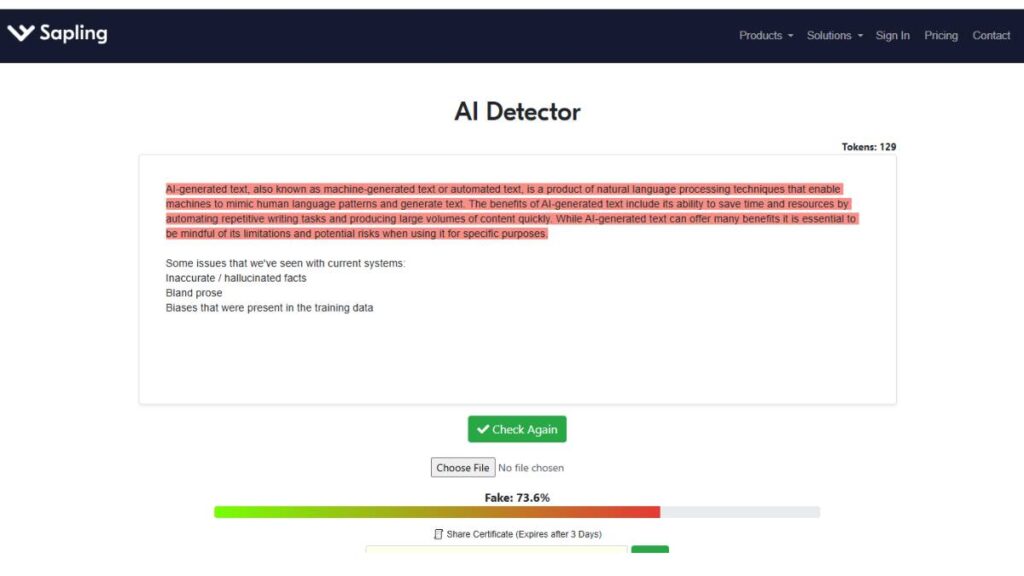

6. Sapling AI Detector – Free AI Content Detection Tool

Sapling AI Detector is one of the more accessible options.

Highlights:

- Instant AI score

- Free usage tier

- Quick, no-friction scanning

It’s useful for fast checks, especially for short business communication or quick drafts.

That said, free AI detectors often come with limitations:

- Less granular reporting

- Fewer integrations

- Lower consistency on longer documents

For casual use, it works. For high-stakes academic or enterprise decisions, it may not be robust enough.

7. Writer.com AI Content Detector – Enterprise AI Checker

Writer.com approaches AI detection through a brand governance lens.

Key capabilities:

- API integration

- Enterprise-grade security

- Compliance workflows

- Brand voice monitoring

This isn’t just about detecting AI content. It’s about ensuring enterprise teams follow internal guidelines, tone, terminology, and compliance standards.

For companies managing distributed content teams, this broader governance angle is valuable.

8. Crossplag AI Detector – AI + Plagiarism Hybrid Tool

Crossplag blends plagiarism detection with AI identification.

What to expect:

- Dual AI + plagiarism scanning

- Academic-focused use cases

- Transparent detection reporting

It appeals to institutions that want layered verification, checking for copied content and AI-generated patterns in one scan.

Accuracy is solid, though like many hybrid tools, its AI detection may not be as aggressive as AI-only competitors.

9. ZeroGPT – Free AI Content Detector for Quick Checks

ZeroGPT markets itself as a free AI content detector with instant results.

It’s popular because:

- No sign-up required

- Immediate AI probability score

- Simple interface

However, reliability concerns come up frequently in discussions around it.

Free tools often prioritize speed over deep statistical analysis. For quick screening, it’s convenient. For formal academic review or enterprise compliance, caution is advised.

10. Pangram Labs AI Detector – Research-Based AI Detection Model

Pangram Labs positions itself as a research-driven AI detection platform.

Key elements:

- Emphasis on model training transparency

- Focus on academic integrity

- Frequently compared with GPTZero

- Ongoing model refinement

Its strength lies in a more academic, research-oriented approach to detection logic.

Compared with GPTZero, Pangram tends to focus more heavily on technical detection modeling rather than classroom interface design.

Choosing the Right AI Content Detector

Before picking a tool, clarify the stakes:

- Reviewing student essays? Prioritize LMS integration and transparency.

- Vetting freelance blog content? Look for strong long-form detection combined with plagiarism scanning.

- Managing enterprise compliance? API access and security controls matter most.

- Running quick checks? A free tool might be enough; just understand its limits.

AI content detection is less about finding a perfect system and more about building a reliable review process. The strongest setups combine:

- AI probability scoring

- Plagiarism scanning

- Editorial oversight

- Clear documentation

The tools above each solve part of that equation. The right choice depends on where accuracy, scale, and workflow matter most in your organization.


How to Use an AI Content Detector (Step-by-Step Guide)

Using an AI content detector isn’t complicated. But using it well? That’s different.

Too many teams treat it like a lie detector. Paste text. Get score. Accept the verdict. Move on.

That’s not how it should work.

An AI content detector is a review layer, not a judge. Here’s how to approach it properly.

Step 1: Add Your Content

Most AI content detectors allow you to:

- Paste text directly into a dashboard

- Upload a document (Word, PDF, etc.)

- Connect through Google Docs integration

- Use an API for bulk submission

If you’re reviewing short-form content such as emails, essays, or blog drafts, pasting text works fine.

For longer editorial workflows, document upload or Google Docs integration is cleaner. It preserves formatting and makes collaboration easier.

For enterprises? API integration is usually the smarter route. That way, detection runs in the background without interrupting the workflow.

The key here is simple: scan the final version, not an early draft. Partial drafts can trigger misleading signals.

Step 2: Scan Your Text for AI Detection

Once submitted, the system runs its analysis and generates a report. Most tools provide:

- An overall AI probability score

- Sentence or paragraph-level highlights

- A confidence percentage

Don’t obsess over a single number. Context matters.

A 60% AI probability in a technical whitepaper doesn’t mean it’s machine-written. Highly structured writing can look statistically “clean.” That’s normal.

Look for patterns instead:

- Entire sections flagged heavily

- Repetitive sentence structures

- Overly consistent tone across long passages

That’s where detection becomes useful, as a pattern spotter.

Step 3: Review the AI Probability Score

This is where nuance matters.

An AI probability score is not a verdict. It’s a statistical likelihood.

If the score is extremely high (for example, 90%+), it suggests the content strongly resembles AI-generated patterns. That warrants closer inspection.

If it’s in the middle range, say 40–70%, the situation is less clear. This is often where mixed AI-human writing lands.

Instead of asking, “Is this AI?” ask better questions:

- Does the writing show real insight?

- Are there original examples or analysis?

- Is the tone too uniform?

- Does it feel overly generalized?

AI detection should support editorial judgment, not replace it.
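One way to keep score interpretation consistent across a team is to write the bands down as a small triage rule. The sketch below uses the 90%+ and 40–70% ranges mentioned above; the 70–90% and below-40% bands are assumptions added to cover the remaining cases, and every outcome is a prompt for human review rather than a verdict.

```python
def triage(score: float) -> str:
    """Suggest a review action for an AI probability score from 0 to 100."""
    if score >= 90:
        return "strong resemblance: inspect closely"
    if score >= 70:
        return "elevated: review flagged sections"  # assumed band, not from the guide
    if score >= 40:
        return "unclear: possibly mixed AI-human writing"
    return "low: no action based on the score alone"  # assumed band

for s in (95, 55, 10):
    print(s, "->", triage(s))
```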

Step 4: Refine AI-Flagged Content

If content is flagged and you need to lower the AI detection score, the solution isn’t random word swaps. That rarely works long-term.

Instead, focus on depth and specificity.

To reduce the AI detection score naturally:

- Add original examples or data points

- Include nuanced opinions or trade-offs

- Break predictable sentence patterns

- Vary the rhythm intentionally

- Replace generic phrasing with precise language

Editing AI-generated text isn’t about “tricking” detectors. It’s about improving quality.

Strong human writing tends to include:

- Slight asymmetry

- Occasional imperfection

- Clear point of view

- Context that reflects experience

When content moves from generic to thoughtful, detection scores often shift as a byproduct.

Making AI content more human isn’t cosmetic. It’s structural.

AI Content Detector for Different Use Cases

Not every AI content detector is built for the same environment. A university’s needs look very different from a marketing agency’s.

Understanding the use case changes everything.

AI Content Detector for Academia

In academic settings, the primary goal is preserving integrity.

Schools use AI content detectors to:

- Detect AI-generated essays

- Review research submissions

- Support academic integrity investigations

- Integrate directly with LMS platforms

The workflow usually looks like this: student submits → system scans → report generates → educator reviews.

Important distinction: most institutions treat AI detection as a signal, not definitive proof.

Because false positives happen. Especially with highly structured academic writing.

That’s why many schools combine:

- AI detection

- Plagiarism checks

- Writing history review

- Instructor judgment

AI detection is one layer in a broader academic integrity framework.

AI Content Detector for SEO Experts

For SEO professionals and content agencies, the concern isn’t catching students. It’s protecting brand authority.

Common use cases include:

- Detecting outsourced AI-written content

- Reviewing freelance submissions

- Verifying client deliverables

- Maintaining quality standards

Many agencies don’t ban AI. They manage it.

The goal is to ensure that content isn’t generic, templated, or overly synthetic. Detection tools help identify when drafts need more human insight.

It’s less about punishment. More about performance and credibility.

AI Detector for Schools & Universities

At scale, schools need workflow integration.

The best AI tools for schools offer:

- LMS integration for AI detection

- Automated originality reports

- Clear, explainable probability scores

- Administrative dashboards

Teacher workflow integration is critical. If detection tools create friction, they won’t get used consistently.

In higher education, especially, transparency matters. Students often want to understand why something was flagged. Tools that provide sentence-level breakdowns are better suited for this environment.

Enterprise AI Detection via API

In enterprise environments, volume changes the equation.

Companies may need:

- Scalable AI detection

- Bulk scanning of thousands of documents

- API-based automation

- Security and compliance controls

Manual scanning doesn’t scale in large organizations. API integration allows detection to run quietly in the background, flagging risk without disrupting workflow.
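A background scan can be sketched as a loop that scores each document and collects the ones needing human review. The `scan_batch` function, the 90-point threshold, and the `fake_detect` scorer below are all hypothetical; a real pipeline would replace `fake_detect` with a call to whichever vendor API your organization uses.

```python
from typing import Callable

def scan_batch(
    documents: dict[str, str],
    detect: Callable[[str], float],
    threshold: float = 90.0,
) -> list[str]:
    """Return IDs of documents whose score (0 to 100) meets the threshold."""
    flagged = []
    for doc_id, text in documents.items():
        if detect(text) >= threshold:
            flagged.append(doc_id)  # queue for human review, not automatic rejection
    return flagged

def fake_detect(text: str) -> float:
    # Placeholder scorer for the example; not a real detection method.
    return 95.0 if "synthetic" in text else 10.0

docs = {"brief-01": "a synthetic sample draft", "brief-02": "meeting notes from Tuesday"}
print(scan_batch(docs, fake_detect))
```

Passing the detector in as a function keeps the scanning logic independent of any single vendor, which makes it easier to swap or compare tools later.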

Security becomes a priority here. Enterprises want clarity on:

- Data storage

- Encryption standards

- Access control

- Audit trails

AI detection at the enterprise level isn’t about catching content after the fact. It’s about embedding review into the system itself.

AI Content Detector Chrome Extension & Integrations

Standalone dashboards are useful. But real value comes from integration.

The more seamlessly AI detection fits into existing workflows, the more likely it is to be used correctly.

AI Detector Browser Extension

An AI content detector Chrome extension allows real-time scanning directly in the browser.

Benefits include:

- Instant AI probability checks

- Reviewing content inside CMS platforms

- Scanning web pages or drafts quickly

For editors and teachers, this saves time. There’s no need to copy and paste between platforms constantly.

That said, browser-based scanning is typically best for shorter content. Large documents often require full-platform scans for better accuracy.

Google Docs AI Detection

Google Docs integration is increasingly common.

Instead of exporting and uploading documents manually, detection runs inside the writing environment.

This streamlines:

- Editorial review

- Classroom submissions

- Collaborative editing

It also makes version comparison easier. Teams can review drafts before and after revisions without jumping between tools.

LMS AI Detector Integration

In education, LMS integration is almost non-negotiable.

When AI detection connects directly to platforms like Canvas or Blackboard, it becomes part of the submission pipeline.

Students submit once. Detection runs automatically. Reports are attached to the assignment.

No extra steps. No friction.

That consistency is what makes detection manageable at scale.

Multi-Language AI Content Detection

As AI tools expand globally, multi-language detection has become essential.

Many early AI content detectors focused primarily on English. Now, institutions and businesses need support for:

- Spanish

- French

- German

- Portuguese

- And other widely used languages

Accuracy can vary significantly across languages. Some detectors are stronger in English because that’s where most training data exists.

If you operate internationally, test detection quality in your primary language before committing long-term.

AI content detection is no longer a niche tool. It’s becoming infrastructure, embedded into education systems, publishing workflows, and enterprise compliance frameworks.

The real question isn’t whether to use an AI content detector.

It’s how intentionally you implement it.

AI Content Detector Accuracy: Real-World Testing Insights

Accuracy is the question behind every AI content detector.

Everyone wants a number. A percentage. A clear winner.

But once you start testing these tools in real-world conditions, things get more nuanced.

Testing AI Detectors Against GPT-4 & Advanced LLMs

When testing AI content detectors against advanced language models, a few patterns tend to show up:

- Fully AI-generated, unedited content is usually flagged with high confidence.

- Lightly edited AI drafts fall into a gray zone.

- Heavily edited or hybrid AI-human content becomes difficult to classify.

- Highly structured human writing can trigger false positives.

The more predictable and uniform the text, the more likely it is to resemble machine output, regardless of who wrote it.

Short-form content also behaves differently from long-form content. A 200-word paragraph may not provide enough statistical signal for accurate classification. Longer essays, reports, or blog posts tend to produce more stable results.

So when evaluating accuracy, context matters:

- What type of content is being scanned?

- How long is the document?

- Is it purely AI-generated or edited?

- Is the writing technical, creative, or academic?

Accuracy isn’t a universal number. It shifts depending on these variables.

Which AI Content Detector Is Most Accurate?

There isn’t one universally “most accurate” AI content detector.

Some tools perform better on academic essays. Others are stronger on long-form marketing content. A few excel in multi-language detection.

In most independent comparisons, enterprise-grade tools tend to outperform free detectors. That’s not surprising; they invest more heavily in model training and continuous updates.

But even top-tier detectors can disagree with each other on the same document.

That’s why relying on a single scan result can be risky. Many institutions and agencies:

- Cross-check with a second detector

- Combine AI detection with plagiarism analysis

- Use human editorial review as the final step

The most accurate system is usually a layered one.
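A layered check can be expressed as a simple rule that compares two detector scores before anything reaches a human reviewer. The function name, the 90-point flag level, and the 30-point disagreement gap below are assumptions for illustration; tune them against your own test documents.

```python
def cross_check(
    score_a: float,
    score_b: float,
    flag_at: float = 90.0,
    max_gap: float = 30.0,
) -> str:
    """Combine two detector scores (0 to 100) into a suggested next step."""
    if abs(score_a - score_b) > max_gap:
        return "detectors disagree: send to human review"
    if min(score_a, score_b) >= flag_at:
        return "both detectors flag: inspect closely"
    return "no strong signal: routine editorial review"

print(cross_check(95, 20))
print(cross_check(95, 92))
print(cross_check(30, 20))
```

Every branch ends in some form of human review, which reflects the point above: the second detector narrows attention, it does not settle the question.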

Limitations of AI Writing Detection Tools

AI writing detection tools have real limitations. Ignoring them leads to misuse.

Common limitations include:

- False positives on formal academic writing

- Difficulty detecting paraphrased AI text

- Lower accuracy in non-English languages

- Reduced reliability on short documents

AI models are improving at mimicking human variability. That makes detection harder over time.

There’s also a broader issue: writing styles overlap. Clear, structured writing can resemble AI output. That doesn’t make it machine-generated.

This is especially sensitive in education. Flagging a student incorrectly can damage trust and credibility.

AI detection should inform decisions, not dictate them.

Why No AI Detector Is 100% Reliable

AI detection is fundamentally probabilistic.

It works by identifying statistical similarities between a piece of writing and known AI-generated patterns. It does not verify authorship. It cannot confirm intent.

As language models evolve, they produce more varied and human-like outputs. Detection models must constantly adapt.

Even then:

- AI-human hybrid writing complicates classification.

- Edited AI content may evade detection.

- Highly polished human writing may look artificial.

Expecting 100% reliability misunderstands how these systems function.

The smarter approach is to treat AI content detection as part of a broader review framework, one that includes human oversight, contextual analysis, and documentation.

Security, Privacy & Compliance in AI Content Detection

Accuracy gets attention. Security should too.

When you upload content into an AI content detector, you’re potentially sharing sensitive material: academic submissions, internal documents, and client drafts.

That raises important questions.

Data Storage Policies

Before adopting any AI content detector, review its data storage practices.

Key questions to ask:

- Is uploaded content stored permanently or temporarily?

- Is it used to train detection models?

- Can users request deletion?

- Is data encrypted at rest?

In academic environments, student data privacy is critical. In enterprise settings, intellectual property protection is even more sensitive.

Transparency in data handling isn’t optional anymore. It’s expected.

Enterprise Security Controls

For organizations using AI detection at scale, security controls matter just as much as detection accuracy.

Look for:

- Role-based access control

- Audit logs

- Administrative dashboards

- SOC 2 or similar compliance standards

Enterprise AI detection should integrate into existing security frameworks, not create new vulnerabilities.

The more content you scan, the more important governance becomes.

API Security & Encryption

When using AI detection via API, security risks shift from the user interface to infrastructure.

Important considerations include:

- Secure API authentication

- Encrypted data transmission

- Rate limiting and monitoring

- Clear documentation of data retention

API-based detection is powerful because it scales. But scale without security creates exposure.
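As a minimal sketch of the first two considerations, the helper below builds an authenticated request and refuses to target anything other than HTTPS. The endpoint URL, the JSON payload shape, and the Bearer token scheme are all assumptions; check your vendor's documentation for the actual authentication method and request format. The request is only constructed here, not sent.

```python
import json
import urllib.request

def build_scan_request(url: str, api_key: str, text: str) -> urllib.request.Request:
    """Build a POST request for a hypothetical detection endpoint."""
    if not url.startswith("https://"):
        raise ValueError("refusing to send content over an unencrypted connection")
    body = json.dumps({"text": text}).encode("utf-8")  # assumed payload shape
    headers = {
        "Authorization": f"Bearer {api_key}",  # assumed auth scheme
        "Content-Type": "application/json",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")

# Placeholder URL and key; replace with values from your provider.
req = build_scan_request("https://detector.example/v1/scan", "YOUR_API_KEY", "Draft to check")
print(req.get_method(), req.full_url)
```

In practice the API key should come from a secrets manager or environment variable rather than being written into source code.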

In regulated industries, such as education, healthcare, and finance, compliance requirements must be addressed upfront, not after adoption.

AI Content Detector vs Plagiarism Checker: What’s the Difference?

AI content detection and plagiarism detection are often confused. They solve different problems.

AI-Generated Text vs Copied Text

A plagiarism checker looks for duplicated content. It compares submitted text against existing sources (websites, academic papers, and databases) and flags matching passages.

An AI content detector does not look for copied material. It evaluates whether the writing resembles machine-generated output.

You can have:

- Original AI-generated content (not plagiarized).

- Human-written content that is plagiarized.

- AI-generated content that is also plagiarized.

They are separate risks.
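To see why the two checks are different in kind, consider a simplified version of what a plagiarism checker does: it looks for exact word sequences shared with a known source. The sketch below measures overlap of five-word sequences. It is a stand-in only; real plagiarism tools search large indexes and handle paraphrase, and the sample sentences are invented.

```python
def ngrams(text: str, n: int = 5) -> set[tuple[str, ...]]:
    """All runs of n consecutive words in the text."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}

def overlap_ratio(submission: str, source: str, n: int = 5) -> float:
    """Share of the submission's word n-grams that also appear in the source."""
    sub = ngrams(submission, n)
    if not sub:
        return 0.0  # submission shorter than n words
    return len(sub & ngrams(source, n)) / len(sub)

source = "the quick brown fox jumps over the lazy dog near the river"
copied = "the quick brown fox jumps over the lazy dog"
original = "a slow red fox walks under a sleepy cat today"

print(overlap_ratio(copied, source))
print(overlap_ratio(original, source))
```

Freshly generated AI text would typically score near zero on a check like this because it matches no existing source, which is why a separate pattern-based detector is needed.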

When to Use Both Tools

In most academic and publishing workflows, the safest approach is to use both.

Plagiarism detection answers:

“Was this copied?”

AI content detection answers:

“Does this appear machine-generated?”

Together, they provide a more complete picture of content authenticity.

For example:

- Universities often combine AI detection and plagiarism scanning in submission systems.

- Agencies may run plagiarism checks to protect originality and AI detection to maintain quality standards.

One without the other leaves gaps.

Combined Detection Systems

Some platforms now offer combined detection systems, running AI probability scoring and plagiarism analysis in a single report.

This simplifies workflow and reduces tool-switching.

However, combined tools should still be evaluated carefully. The strength of their AI detection model may differ from specialized AI-only platforms.

The bigger point is this: content integrity is multi-layered.

AI detection helps identify machine patterns.

Plagiarism detection protects originality.

Human review ensures context and fairness.

When those three elements work together, the system becomes much more reliable than any single tool alone.

How to Prove Your Work Is Original

As AI content detection becomes more common, one question keeps coming up:

“How do I prove this is actually my work?”

Whether you’re a student, a freelancer, a content team, or a corporate communications lead, you need more than just confidence. You need documentation.

Here’s how to approach it strategically.

AI Writing Transparency

Transparency is quickly becoming the new standard.

That doesn’t mean you need to disclose every brainstorming tool or draft iteration. But if AI-assisted writing is part of your workflow, clarity matters.

Smart organizations are:

- Defining internal policies around AI-assisted content

- Documenting when AI tools are used for ideation vs drafting

- Differentiating between “AI-assisted” and “AI-generated”

In academic settings, some institutions now allow limited AI assistance but require disclosure. In marketing and publishing, clients increasingly expect clarity about how content is created.

The key is alignment. If expectations are clear upfront, disputes later become far less likely.

Version History & Documentation

One of the strongest defenses against a questionable AI detection result is version history.

Draft progression tells a story:

- Early outline

- Rough draft

- Edits and refinements

- Final submission

Tools that preserve timestamps and edit history provide a paper trail. That trail demonstrates human involvement in a way probability scores cannot.

For teams, maintaining:

- Shared document logs

- Editorial comments

- Revision tracking

adds another layer of protection.

If someone challenges the originality of a piece, showing how it evolved over time is far more persuasive than arguing over a percentage score.
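If your drafts are saved with timestamps, even a small script can summarize how a piece evolved. The sketch below is one possible approach, not a standard: `draft_progression` is a hypothetical helper, the timestamps and draft texts are made-up sample data, and it uses Python’s built-in `difflib` to measure how similar each draft is to the one before it.

```python
import difflib


def draft_progression(drafts):
    """Summarize change between consecutive drafts.

    `drafts` is a list of (timestamp, text) pairs in chronological order.
    """
    report = []
    for (prev_time, prev_text), (time, text) in zip(drafts, drafts[1:]):
        prev_words, words = prev_text.split(), text.split()
        # ratio() is 1.0 for identical word sequences and 0.0 for no overlap
        similarity = difflib.SequenceMatcher(None, prev_words, words).ratio()
        report.append({
            "from": prev_time,
            "to": time,
            "similarity": round(similarity, 2),
            "word_count_change": len(words) - len(prev_words),
        })
    return report


# Hypothetical sample data for illustration only
drafts = [
    ("2026-01-10 09:00", "intro body"),
    ("2026-01-11 14:30", "intro body conclusion"),
]
for step in draft_progression(drafts):
    print(step)
```

A steady series of partial changes across several sessions tells a very different story from a single draft that appears fully formed.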

Submission Reports & AI Confidence Scores

Many AI content detectors generate downloadable reports. These often include:

- Overall AI probability score

- Sentence-level analysis

- Highlighted sections flagged as AI-like

Instead of ignoring these reports, use them proactively.

If your content scans clean, save the report.

If it’s partially flagged, review and refine before submission.

If it’s heavily flagged but genuinely human-written, attach supporting documentation.

It’s important to understand the terminology too:

- An AI probability score usually represents how closely the text resembles machine-generated patterns.

- An AI confidence score reflects how strongly the system believes in its classification.

They’re not proof of authorship. They’re statistical signals.

Treat them as supporting evidence, not verdicts.
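Vendors define these two scores differently, and most don’t publish their formulas. Purely as an illustration, the sketch below assumes one simple relationship: the label comes from comparing the probability to 0.5, and confidence is how far the probability sits from that midpoint. The function name `interpret_score` and this formula are assumptions, not any detector’s documented behavior.

```python
def interpret_score(ai_probability):
    """Turn a 0-1 AI probability into a label plus a confidence value.

    Assumption for illustration: confidence is the distance from the
    0.5 midpoint, rescaled to the 0-1 range.
    """
    if not 0.0 <= ai_probability <= 1.0:
        raise ValueError("ai_probability must be between 0 and 1")
    label = "AI-like" if ai_probability >= 0.5 else "human-like"
    confidence = abs(ai_probability - 0.5) * 2
    return {"label": label, "confidence": round(confidence, 2)}


print(interpret_score(0.92))  # far from the midpoint: a confident call
print(interpret_score(0.55))  # near the midpoint: a borderline call
```

Under this assumption, a 55% score and a 92% score both land on the same label, but only one of them is a strong signal. That gap is why a borderline result deserves human review rather than a conclusion.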

Conclusion: Which AI Content Detector Should You Use?

After everything covered in this guide, the honest answer is a bit less dramatic than most comparison pages suggest. There’s no universal winner. There’s only the right fit for your context.

Large publishers and enterprise teams usually gravitate toward platforms like Copyleaks or Originality.ai because they handle scale without breaking workflow. Schools stick with Turnitin or GPTZero since those systems are already wired into classrooms. Free tools such as ZeroGPT or Sapling? Fine for a quick temperature check. Just not something to stake a policy on.

Here’s the reality that doesn’t get said enough: these systems don’t “catch” AI. They model probability. Sometimes that probability is helpful. Sometimes it’s noisy.

The smarter move isn’t obsessing over which detector claims the highest accuracy. It’s building a layered process: AI detection alongside plagiarism checks, version history, and human review. Software can raise an eyebrow. People still make the call.

FAQs:

What is the best AI content detector in 2026?

“Best” usually depends on pressure. A university handling thousands of submissions will choose differently from a small content team doing spot checks. Enterprise platforms like Copyleaks or Originality.ai are built for scale and policy enforcement. Schools often stay with Turnitin or GPTZero because the infrastructure is already there. Context decides.

Are AI content detectors accurate?

They’re useful, but not definitive. Long, untouched AI drafts tend to get flagged confidently. Mixed or heavily edited writing? That’s where things get fuzzy. These systems estimate likelihood based on patterns. They don’t verify authorship. Anyone treating the score as a verdict is overestimating the tool.

Can AI detectors detect ChatGPT content?

Sometimes clearly, sometimes not at all. Clean outputs often show recognizable statistical patterns. Once the text is reshaped, rewritten, or layered with original thinking, detection becomes less predictable. These systems analyze structure and probability, not intent. That distinction matters more than people think.

Is AI detection the same as plagiarism detection?

Not even close. Plagiarism tools search for copied material from existing sources. AI detectors look for machine-like construction patterns. A piece can be completely original and still AI-generated. Or fully human-written and plagiarized. Two different problems, two different solutions.

Can AI content bypass AI detectors?

Bluntly, edited content can become harder to classify. When humans restructure, inject nuance, or shift voice, statistical patterns change. That doesn’t mean detectors fail; it means hybrid writing blurs lines. The real conversation should be about transparency and standards, not evasion tactics.

Which AI detector is best for schools?

Schools tend to favor systems that plug into learning platforms and generate structured reports. Turnitin and GPTZero fit that bill. But responsible institutions pair those results with faculty judgment. Detection alone shouldn’t decide outcomes, especially when academic integrity is on the line.

Which AI content detector is best for SEO agencies?

Agencies care about volume and workflow. Bulk scanning, API access, and combined plagiarism checks matter more than flashy dashboards. Tools like Originality.ai and Copyleaks are often selected for operational reasons. Consistency across dozens of writers becomes the priority.

Are free AI content detectors reliable?

They’re fine for quick checks. Not policy decisions. Free tools often rely on lighter models and fewer updates. Results can swing more dramatically. For low-risk scenarios, that’s acceptable. For compliance or grading? Probably not enough.

How do you reduce an AI detection score?

Instead of chasing the score, focus on depth. Add original reasoning. Vary sentence rhythm. Break predictable phrasing. AI writing tends to flow too smoothly; humans interrupt themselves, shift tone, and emphasize oddly. Real revision naturally lowers machine-like signals.

Does Google penalize AI-generated content detected by AI content detectors?

Search engines evaluate quality and usefulness, not the origin story of the text. AI-generated content isn’t automatically penalized. Thin, low-value content is. Detection tools don’t feed into search rankings directly. They operate in separate ecosystems.

Can AI content detectors detect paraphrased AI text?

Heavily paraphrased content can reduce detection accuracy. Some structural patterns still surface, especially in longer pieces. But classification becomes less certain. That’s why borderline scores are common in revised documents.

What is the difference between an AI probability score and an AI confidence score?

Probability estimates how likely text is to resemble machine output. Confidence reflects how certain the system feels about that estimate. Both are statistical interpretations. Neither confirms who actually wrote the content.

How accurate are AI content detectors for non-English languages?

Performance often drops outside English. Many systems were trained primarily on English datasets. Enterprise platforms are expanding multi-language support, but variability remains. Language structure and training data depth both influence outcomes.

Can AI content detectors detect content from GPT-4 or newer LLMs?

Most updated systems are trained to recognize outputs from advanced models. Still, as language models evolve, their outputs become less uniform. Detection adapts, but it’s a constant adjustment cycle.

Do AI content detectors store or reuse uploaded content?

Policies vary. Some platforms retain text temporarily; others offer strict deletion controls. Enterprise vendors usually provide clearer documentation around data handling. Reviewing those policies before uploading sensitive content is simply good practice.

Which AI content detector offers the best API for enterprises?

Enterprises typically look for secure authentication, detailed documentation, and scalable pricing. Copyleaks and Writer.com are often positioned for API-driven environments. The best fit depends on internal infrastructure and compliance needs.

Can students appeal an AI detection result?

In many institutions, yes. Since results are probabilistic, appeals and human review processes are increasingly common. Draft history and documented revisions can strengthen those discussions.

Are AI content detector Chrome extensions reliable for real-time scanning?

They’re convenient. That’s their strength. But they usually provide lighter analysis than full platform reports. For serious evaluations, a complete scan through the main system is more dependable.

How do AI content detectors measure perplexity and burstiness in writing?

Perplexity gauges how predictable a sequence of words is to a language model. Lower perplexity, meaning more predictable text, often signals machine-like structure. Burstiness looks at variation: changes in sentence length and rhythm. Human writing tends to fluctuate. Machines, at least historically, smooth things out.
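Both ideas can be sketched in a few lines. The example below is a toy under loud assumptions: real detectors score text with large neural language models, while this stand-in uses a simple word-frequency (unigram) model built from a reference text, and it measures burstiness as the spread of sentence lengths relative to their average. The function names and both formulas are illustrative choices, not any tool’s published method.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def tokenize(text):
    return re.findall(r"[a-z0-9']+", text.lower())


def unigram_perplexity(text, reference):
    """Perplexity of `text` under a word-frequency model built from `reference`.

    Add-one smoothing gives unseen words a small, non-zero probability.
    """
    counts = Counter(tokenize(reference))
    total = sum(counts.values())
    vocab_size = len(counts) + 1  # one extra slot shared by all unseen words
    tokens = tokenize(text)
    if not tokens:
        raise ValueError("text contains no words")
    log_prob = sum(
        math.log((counts[token] + 1) / (total + vocab_size)) for token in tokens
    )
    return math.exp(-log_prob / len(tokens))


def burstiness(text):
    """Standard deviation of sentence lengths divided by their mean."""
    sentences = re.split(r"[.!?]+", text)
    lengths = [len(tokenize(s)) for s in sentences if tokenize(s)]
    if len(lengths) < 2:
        return 0.0  # variation is undefined for a single sentence
    return pstdev(lengths) / mean(lengths)


reference = "the cat sat on the mat"
print(unigram_perplexity("the cat", reference))        # familiar words: lower
print(unigram_perplexity("zebra quantum", reference))  # unseen words: higher
print(burstiness("One two three. Four five six."))     # uniform sentences
print(burstiness("Hi. This one runs quite a bit longer than that."))
```

Familiar wording produces lower perplexity, and evenly sized sentences produce a burstiness of zero. A detector reads that combination as smooth and predictable, which is also why short or formulaic human writing can be misread.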